UTF-8 uses 1 byte to encode an English character. Overall it uses between 1 and 4 bytes per character, and it has no concept of byte order. Most characters used by European languages (the Latin, Greek and Cyrillic alphabets) are encoded in two bytes or fewer.
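
To make the byte counts concrete, here is a minimal Python sketch that measures how many bytes UTF-8 needs for a few sample characters. The helper name `utf8_length` and the sample characters are my own choices for illustration, not part of any library.

```python
def utf8_length(ch: str) -> int:
    """Return how many bytes UTF-8 uses to encode a single character."""
    return len(ch.encode("utf-8"))


# Illustrative samples: an English letter, an accented Latin letter,
# a Cyrillic letter, the euro sign, and an emoji.
samples = ["A", "é", "Я", "€", "😀"]

for ch in samples:
    # ord() gives the Unicode code point of the character.
    print(f"U+{ord(ch):04X}: {utf8_length(ch)} byte(s)")
```

The English letter takes one byte, the accented and Cyrillic letters take two, the euro sign takes three, and the emoji takes four.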

Here is where international standards become critical. When the entire world uses the same character encoding scheme, every computer can display the same characters. This is where the Unicode Standard comes in. There are several Unicode encoding formats: UTF-8, UTF-16 and UTF-32. Encoding is always related to a charset: the encoding process converts characters to bytes, and decoding converts bytes back to characters.
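
The round trip between characters and bytes can be sketched in a few lines of Python. This is only an illustration: the helper `encoded_sizes` and the sample word are assumptions of mine, and the codec names are the ones Python's standard library ships with.

```python
def encoded_sizes(text: str) -> dict:
    """Encode text with three Unicode formats and return the byte counts."""
    sizes = {}
    for codec in ("utf-8", "utf-16-le", "utf-32-le"):
        data = text.encode(codec)          # characters -> bytes
        assert data.decode(codec) == text  # bytes -> characters (round trip)
        sizes[codec] = len(data)
    return sizes


print(encoded_sizes("Zoë"))

# UTF-16 has a byte order, so the same letter has two possible layouts.
print("A".encode("utf-16-le"))  # low byte first
print("A".encode("utf-16-be"))  # high byte first

# Decoding with the wrong charset produces the wrong characters.
print("ë".encode("utf-8").decode("latin-1"))
```

The last line shows why the charset matters: the two UTF-8 bytes for "ë" are read as two separate Latin-1 characters when the decoder assumes the wrong encoding.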

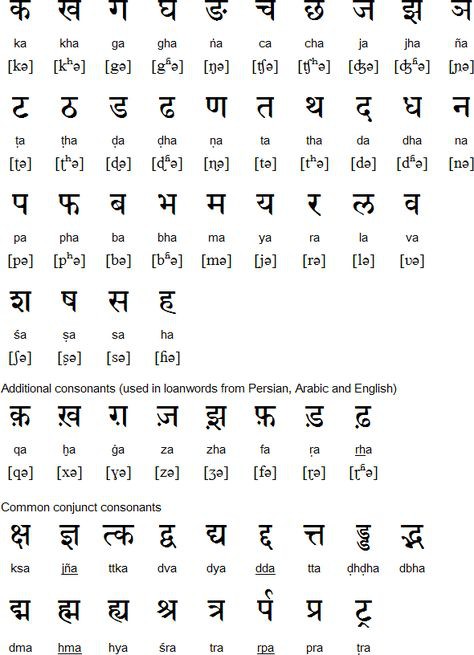

While ASCII encoding was acceptable for most common English-language characters, numbers and punctuation, it was constraining for the rest of the world's languages. As a result, other languages required different encoding schemes, and character definitions changed according to the language. Having encoding schemes of different lengths required programs to figure out which one to apply depending on the language being used. Here is the result set of char to ASCII value:
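
As a rough illustration of mapping characters to ASCII values, and of where ASCII stops being enough, here is a small Python sketch. The function names `ascii_values` and `fits_in_ascii` are hypothetical helpers written for this example.

```python
def ascii_values(text):
    """Return (character, numeric value) pairs for each character in text."""
    return [(ch, ord(ch)) for ch in text]


def fits_in_ascii(text):
    """Return True if every character can be encoded as 7-bit ASCII."""
    try:
        text.encode("ascii")
        return True
    except UnicodeEncodeError:
        return False


for ch, value in ascii_values("Az0!"):
    print(ch, value)

print(fits_in_ascii("cafe"))  # plain English letters only
print(fits_in_ascii("café"))  # contains an accented letter
```

The accented letter falls outside the ASCII range, which is the limitation that pushed other languages toward their own encoding schemes.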
